Aws postgresql 1011/4/2022

It is implemented by streaming the write-ahead-log (WAL) records in binary format to the replica, which then get applied byte-by-byte i.e. The traditional mechanism in PostgreSQL for keeping standby/replica nodes in-sync with the primary is physical replication. It took ~27 hours! SELECT coalesce((octet_length(c1::text)) / (1024.0), 0) AS size FROM t1 ORDER BY size DESC LIMIT 1 Logical replication pluginīefore getting into which logical replication plugin to use, let’s look under the hood and understand logical decoding. To ensure it doesn’t impact normal db operations, we temporarily provisioned a hidden replica just for running it.

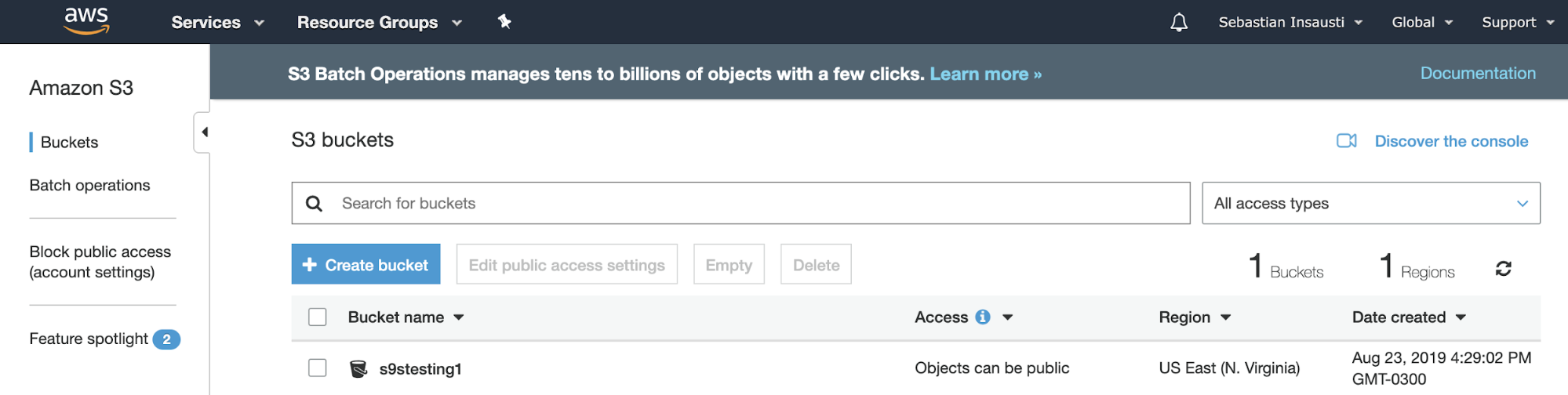

Warning! This query can be very expensive on a large db. We based this limit on the max size of the json columns that existed in our db using the following query: If the data exceeds the specified size limit, then it gets truncated, so beware of using it with json types. This can be worked around by choosing “Limited LOB mode” when creating the task, which allows you to set a known limit which is applicable to all the LOBs in the db. The problem with such columns is that they can potentially contain unbounded data and hence DMS has no clue about how much memory to pre-allocate for them during migration. For homogeneous migration (i.e both source and target dbs are same), partially supported data types are mostly what DMS calls “Large Objects” (LOBs). If you use json data type in your db, you may come across this case when migrating from PG to PG. Partial support on the other hand means DMS can migrate these data types but only under certain conditions. If that’s a deal breaker then you may need to evaluate other options for migration. If you are using data types which are not supported, then those columns won’t get migrated. You can even create dummy endpoints and tasks just for this purpose - even a source db with just the schema loaded should be sufficient. For that, make sure you check the “Pre-migration assessment” option when starting the task. One good thing is that databases can be checked beforehand for compatibility so that there are no surprises during migration. tsvector whereas others that are supported partially eg. There are some pg data types which are not supported at all eg. There are certain limitations about which data/column types are supported. Refer AWS documentation for complete list of prerequisites. For PostgreSQL, the server version needs to be 9.4+ and pglogical extension needs to be installed on both source and target clusters.you can’t have source and target both outside AWS. Either one of the source or target endpoints should be on AWS i.e.There are a few prerequisites for using DMS, mainly: The following diagram illustrates the replication process: It also provides HA/Resiliency with Multi-AZ replication instances. You can perform one-time migrations (full load), and/or replicate ongoing changes (CDC) to keep sources and targets in sync. You may also create the target tables beforehand yourself, if you prefer. DMS creates the tables and associated primary keys if they don’t exist on the target. Then you schedule a task that runs on this server to move your data. You create source and target connections to tell where to extract data from and load to. Using DMSĪt a basic level, DMS provides a server in the AWS cloud that runs replication software. It can be used to migrate data into the AWS Cloud or between combinations of cloud and on-premises setups.Īfter evaluating all the options we ended up choosing DMS. Consistency can be impacted if infra is not optimized correctly.ĭata migration service (DMS) is an AWS cloud service that makes it easy to migrate relational databases, data warehouses, NoSQL databases, and other types of data stores.No support for replicating schema changes (DDLs).Performance and maintenance overhead on source db.It’s an asynchronous PostgreSQL replication system which allows multi-source and multi-target operations. Also, there are some restrictions if there are large objects in the db.Īs we had a couple of very large databases which couldn’t afford a long write downtime, this approach was out of question for us. PostgreSQL comes with the pg_dump and pg_restore scripts which can be used to copy data between clusters in a clean way but it may involve significant downtime for large databases. Options for PG migration pg_dump/pg_restore We have added PG master and one standby as PGPool backends, where read-write requests are served by the master node and read-only requests are served by the standby node. This diagram shows our setup where the applications connect to pgpool nodes via HAProxy.

0 Comments

Leave a Reply.AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed